Some time ago, I set up an elaborate A/B test on subject lines. I liked “How 1.75 Billion Mobile Users See Your Website” and my client manager liked “Business Cards Are No Longer the First Impression.” We learned long ago not to be a focus group of one—um, two—but our testing also proves something else I’ve been saying for years — A/B tests do not stand alone.

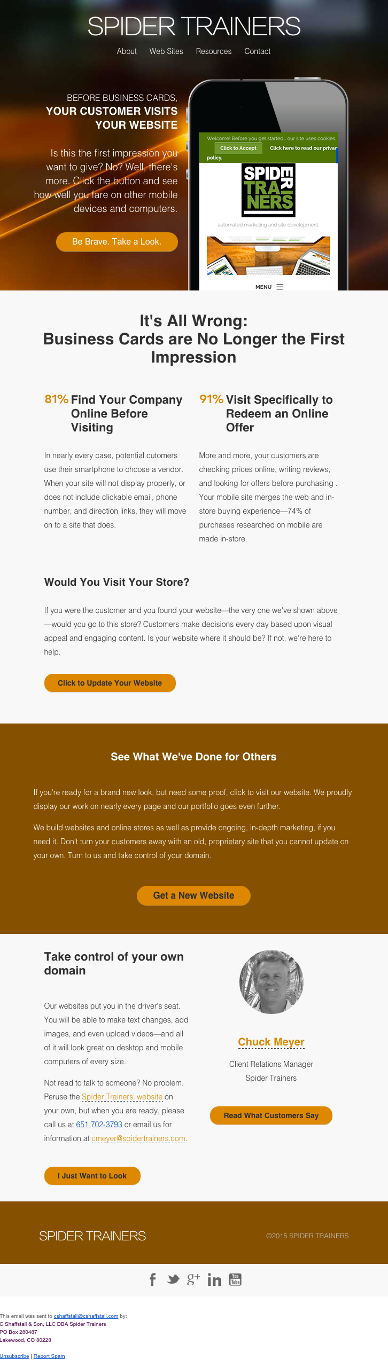

For the Mobile Users campaign, we used dynamic personalization and dropped in an actual screenshot of each recipient’s website as viewed on a modern smartphone. We knew this distinctly personal touch could add a sizeable bump to engagement. It’s one thing to tell a recipient their website looks awful on a phone; it’s another thing to show them.

By the end of the campaign, we would send fewer than 10,000 emails, but before sending the balance, we wanted to know which of the two subject lines would perform better. Marketing operations always wants the best chance of success for its marketing team’s campaigns, so this was a necessary step to ensure our subject line would earn the higher open rate.

For the initial test, we sent 600 emails, half to each subject line. One line won on opens; the other won on clicks to the form. Now we had a better question: is it better to get more people to open and see the message, or fewer people to open but with expectations set so accurately that they click?

The open rate differed by more than 10%, and the CTR by about 2%.
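With only 300 recipients per subject line, it helps to ask how much of a gap like this could be noise. The following is a minimal sketch of a two-proportion z-test, a standard check that is not necessarily what we ran at the time. The counts are hypothetical stand-ins, not our campaign data, and the function name is my own.

```python
import math

def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Return (z, two-sided p-value) for the difference between two rates."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    # Pooled rate under the assumption that both variants perform the same.
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("rates are all 0% or all 100%; the test is undefined")
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical counts for a 300/300 split (NOT the real campaign numbers).
z_open, p_open = two_proportion_z_test(90, 300, 60, 300)    # opens: 30% vs 20%
z_click, p_click = two_proportion_z_test(12, 300, 18, 300)  # clicks: 4% vs 6%

print(f"opens:  z={z_open:.2f}, p={p_open:.4f}")
print(f"clicks: z={z_click:.2f}, p={p_click:.4f}")
```

In this made-up example, the open-rate gap is hard to attribute to chance, while the click gap could easily be noise at this sample size. That is one more reason not to crown a winner from a single send.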

Should I have stopped there and answered only the question I started with (which subject line should we use), or should I have looked at other factors to improve overall success?

The problem I see with many A/B tests is that marketers ask one question, answer it, and move on. Many email-automation tools even encourage this: send two subject lines; whichever wins, use it for the rest. But what about open rate and CTR combined? In this case (and many others), that’s far more important. One step further: what about open rate, CTR, and form completion rate combined? Now we’re onto something.
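To make "combined" concrete, here is a minimal sketch that multiplies the stage rates into one number: completed forms per email sent. The counts are hypothetical, the class and field names are my own, and I am assuming CTR is measured as clicks per open (your tool may report clicks per delivered email instead).

```python
from dataclasses import dataclass

@dataclass
class Variant:
    name: str
    sent: int
    opens: int
    clicks: int
    forms: int  # completed forms

    @property
    def open_rate(self) -> float:
        return self.opens / self.sent

    @property
    def click_to_open_rate(self) -> float:
        return self.clicks / self.opens if self.opens else 0.0

    @property
    def form_completion_rate(self) -> float:
        return self.forms / self.clicks if self.clicks else 0.0

    @property
    def completions_per_send(self) -> float:
        # Product of the three stage rates; algebraically equal to forms / sent.
        return self.open_rate * self.click_to_open_rate * self.form_completion_rate

# Hypothetical counts (NOT the real campaign numbers).
variants = [
    Variant("A", sent=300, opens=90, clicks=12, forms=3),
    Variant("B", sent=300, opens=60, clicks=18, forms=9),
]

def best_by(metric: str) -> Variant:
    """Return the variant with the highest value for the named metric."""
    return max(variants, key=lambda v: getattr(v, metric))

for metric in ("open_rate", "click_to_open_rate", "completions_per_send"):
    print(f"{metric}: winner is {best_by(metric).name}")
```

In this made-up data, A wins on opens but B wins on completed forms per send, which is the kind of split a single-metric "winner" rule would miss.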

There were many factors to consider: time of day, past engagement, lifecycle stage, and more. The subject line was a good place to start, but I couldn’t ignore what I had learned from other campaigns.

This is the hardest part of testing, whether you’re using A/B or multivariate: isolating what you’ve actually learned. That typically means I don’t analyze just the single campaign; I aggregate.

For this campaign, I took our test results and compared them against prior campaign data and looked for patterns. We’ve all read that Thursday mornings are good for sending emails (as one example), but did that hold true for my list? Were open rates affected by time of day, date, day of week, business type, B2C vs. B2B? These are analytics we tracked because we had found each influences open rate.

So yes, we learned which of the two subject lines performed better for opens. We also learned that repeating the test with another 600 recipients on Tuesday morning (instead of Thursday morning) produced almost exactly the opposite result.
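A reversal like this is easy to lose when results are pooled. The sketch below, using hypothetical records and a helper name of my own, groups the same sends two ways: by subject line alone, and by subject line and weekday together.

```python
from collections import defaultdict

# Hypothetical send records: (subject, weekday, sent, opens).
# These are illustrative values, NOT the real campaign numbers.
sends = [
    ("A", "Thu", 300, 90),
    ("B", "Thu", 300, 60),
    ("A", "Tue", 300, 63),
    ("B", "Tue", 300, 87),
]

def open_rate_by(records, key_fn):
    """Sum sent and opens per group, then return the open rate for each group."""
    totals = defaultdict(lambda: [0, 0])  # key -> [sent, opens]
    for rec in records:
        key = key_fn(rec)
        totals[key][0] += rec[2]
        totals[key][1] += rec[3]
    return {key: opens / sent for key, (sent, opens) in totals.items()}

by_subject = open_rate_by(sends, lambda r: r[0])
by_subject_and_day = open_rate_by(sends, lambda r: (r[0], r[1]))

print(by_subject)
print(by_subject_and_day)
```

Pooled by subject, the two lines look nearly tied in this made-up data. Split by weekday, each one clearly wins on a different day, so the grouping you choose decides what the test appears to say.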

A/B tests can be hard. If they were easy, everyone would do them. Our simple one-time test wasn’t enough to make campaign decisions. It took more testing to prove or disprove our theories, and it took aggregating the data with other results to paint the full picture.

We did find a winner: an email with a strong subject line to earn the open, clear presentation of supporting information inside, and a path that led recipients to a form they actually completed, all sent on the right day at the right time, from the right sender. Of course, it was “How 1.75 Billion Mobile Users See Your Website.” People can’t resist big numbers.

While you’re not privy to all of the data we have, on the subject lines alone, which do you prefer?

Amusingly, when I used ChatGPT to review this previously published article for content updates, it responded to the question. Here is AI’s take on our two subject line candidates:

“My pick: How 1.75 Billion Mobile Users See Your Website

It aligns directly with the in-email proof (the mobile screenshot), sets a clear expectation, and typically attracts the right intent for a mobile-readiness offer. If the form and landing experience reinforce that promise, it’s the safer bet.”

Editor’s note: I wrote this article some time ago — but the principle hasn’t changed. This lightly updated version reflects current tools and practices while keeping the same lessons intact.

AI disclosure: This content was originally written by me and later updated with assistance from OpenAI’s GPT-5 for light editing, fact-checking, and modernization. Every word has been reviewed and approved by a human — specifically, me — before publication.